Biography

I am a Ph.D. student in the Department of Biomedical Engineering at Rensselaer Polytechnic Institute, USA, co-advised by Prof. Ge Wang and Prof. Pingkun Yan at the Biomedical Imaging Center. My current research focuses on medical imaging and multimodal analytical frameworks that jointly leverage CT imaging, genetic data, and clinical variables to improve cardiovascular disease (CVD) risk prediction. Previously, I obtained my B.Sc. in Physics from the University of Science and Technology of China. During my undergraduate studies, I worked on deep-learning-based wavefront modulation methods under the supervision of Prof. Xiaoye Xu and Prof. Zhiwei Xiong.

Prof. Ge Wang’s Lab: AI-based X-ray Imaging System (AXIS) lab

Prof. Pingkun Yan’s Lab: Deep Imaging Analytics Lab (DIAL)

- Medical physics

- Medical imaging

- Medical image analysis

- Knowledge learning

- Artificial intelligence

Ph.D. Student in Biomedical Engineering, 2022-present

Rensselaer Polytechnic Institute

B.Sc. in Physics, 2022

University of Science and Technology of China

Featured Publications

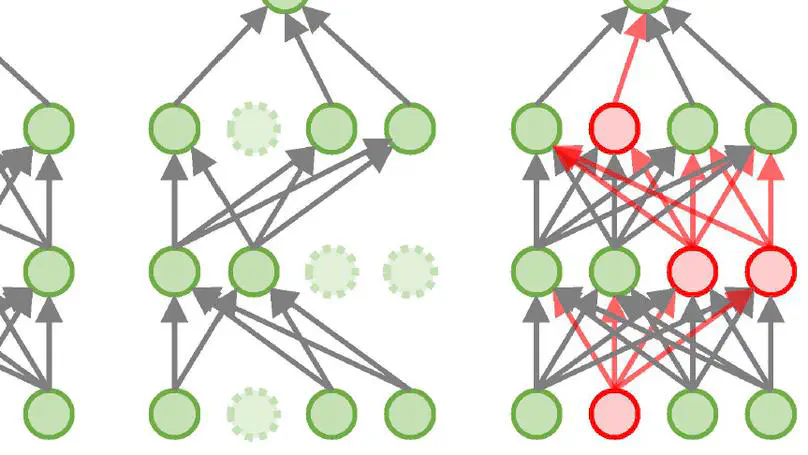

Flipover, an enhanced dropout technique, is introduced to improve the robustness of artificial neural networks. In contrast to dropout, which randomly removes certain neurons and their connections, flipover randomly selects neurons and flips their outputs using a negative multiplier during training. This approach offers stronger regularization than conventional dropout, refining model performance by (1) mitigating overfitting, matching or even exceeding the efficacy of dropout; (2) amplifying robustness to noise; and (3) enhancing resilience against adversarial attacks. Extensive experiments across various neural networks affirm the effectiveness of flipover in deep learning.
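The contrast with dropout can be illustrated with a minimal sketch in plain Python. It is not the paper's implementation: the function name `flipover`, the selection probability `rate`, and the default `multiplier` of -1.0 are assumptions chosen for illustration, and any rescaling or layer placement used in the actual method is not covered here.

```python
import random


def flipover(activations, rate=0.1, multiplier=-1.0, training=True, rng=None):
    """Illustrative flipover-style regularizer (hypothetical helper).

    Each activation is selected independently with probability `rate`
    and multiplied by a negative `multiplier`. Dropout would set the
    selected activations to zero instead.
    """
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must lie in [0, 1]")
    if multiplier >= 0:
        raise ValueError("multiplier must be negative")
    if not training:
        # At inference time the activations pass through unchanged.
        return list(activations)
    rng = rng or random.Random()
    return [a * multiplier if rng.random() < rate else a for a in activations]


# Example call with a fixed seed so repeated runs select the same neurons.
hidden = [0.5, -1.2, 3.0, 0.0, 2.1]
flipped = flipover(hidden, rate=0.4, rng=random.Random(0))
```

With `rate=0.0` the layer is an identity, and with `training=False` it is disabled, mirroring how dropout is usually switched off at inference time.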

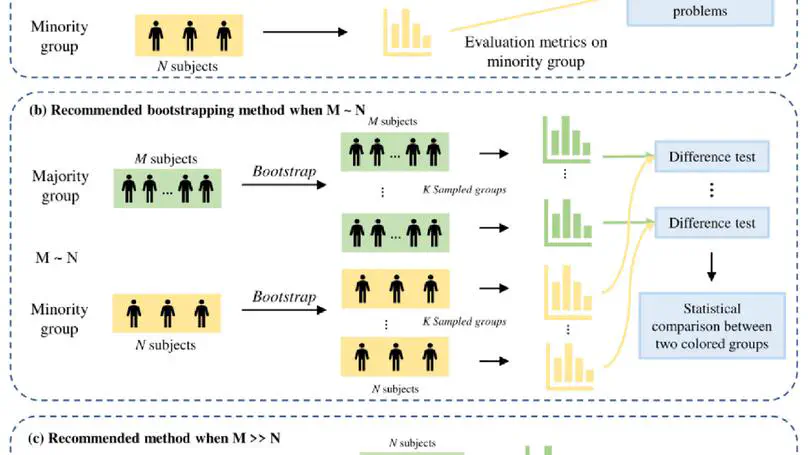

Unfairness in artificial intelligence and machine learning models, often caused by imbalanced datasets, has long been a concern. While many efforts aim to minimize model bias, this study suggests that traditional fairness evaluation methods may themselves be biased. Because results vary under different criteria, a proper evaluation scheme needs multiple evaluation metrics. Moreover, the limited data size of minority groups introduces significant data uncertainty, which can undermine the judgment of fairness. This paper introduces an evaluation approach that estimates data uncertainty in minority groups through bootstrapping from majority groups for a more objective statistical assessment. Extensive experiments reveal that traditional evaluation methods might have drawn inaccurate conclusions about model fairness. The proposed method delivers an unbiased fairness assessment by addressing the inherent complications of model evaluation on imbalanced datasets. The results show that such comprehensive evaluation can provide more confidence when adopting those models.
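One way to read the bootstrapping idea is sketched below in plain Python. It is an interpretation under stated assumptions, not the paper's procedure: the helper names `bootstrap_interval` and `gap_is_significant`, the choice of accuracy as the metric, the 95% percentile interval, and the number of resamples are all hypothetical. The sketch repeatedly draws minority-sized samples from the majority group's per-sample results, so the spread of the metric reflects how much variation the small sample size alone can produce.

```python
import random


def bootstrap_interval(majority_correct, minority_size,
                       n_resamples=2000, alpha=0.05, seed=0):
    """Percentile interval of accuracy on minority-sized majority resamples.

    `majority_correct` is a list of 0/1 flags (1 = correct prediction).
    """
    if minority_size <= 0 or not majority_correct:
        raise ValueError("both groups must be non-empty")
    rng = random.Random(seed)
    stats = []
    for _ in range(n_resamples):
        # Sample with replacement, matching the minority group's size.
        sample = rng.choices(majority_correct, k=minority_size)
        stats.append(sum(sample) / minority_size)
    stats.sort()
    lower = stats[int((alpha / 2) * n_resamples)]
    upper = stats[min(n_resamples - 1, int((1 - alpha / 2) * n_resamples))]
    return lower, upper


def gap_is_significant(minority_correct, majority_correct, **kwargs):
    """True if minority accuracy falls outside the bootstrap interval."""
    lower, upper = bootstrap_interval(
        majority_correct, len(minority_correct), **kwargs)
    accuracy = sum(minority_correct) / len(minority_correct)
    return not (lower <= accuracy <= upper)
```

Under this reading, a gap between groups is flagged only when the minority-group metric lies outside the range that sample-size noise would explain. The same pattern could be repeated for other metrics, in line with the call for multiple evaluation criteria.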

Publications

Contact

Please leave a message if you have any questions.

- liangy15@rpi.edu

- 110 8th Street, Troy, NY 12180

- 4231 Biotechnology and Interdisciplinary Studies Building